A study published last week by researchers at Stanford University shows how deep, and potentially dangerous, the secrecy surrounding GPT-4 and other cutting-edge AI systems can be.

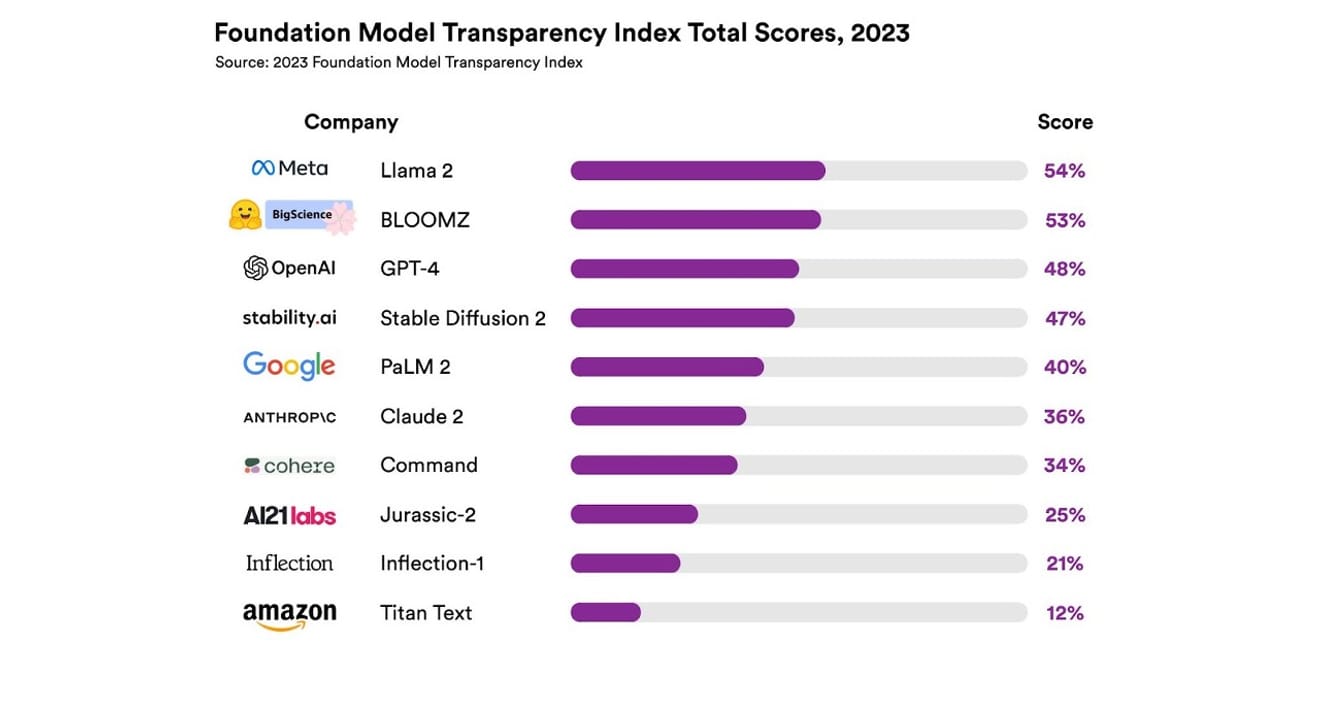

Introducing the Foundation Model Transparency Index, Stanford University

The researchers examined 10 AI systems in total, most of them large language models like those behind ChatGPT and other chatbots. These include widely used commercial models such as OpenAI's GPT-4, Google's PaLM 2, and Amazon's Titan Text. Transparency was assessed against 13 criteria, including how openly developers disclosed the data used to train the models (how the data was collected and labeled, whether copyrighted material was included, and so on). The study also checked whether developers disclosed the hardware used to train and run each model, the software frameworks involved, and the project's energy consumption.

The result: no AI model scored above 54% on the transparency scale across all of these criteria. Overall, Amazon's Titan Text proved the least transparent and Meta's Llama 2 the most open. Interestingly, Llama 2, which sits at the center of the recent debate over open versus closed models and is itself an open-source model, did not disclose the data used for training or its data collection and curation methods. In other words, even as AI's impact on society grows, the industry's opacity remains widespread and persistent.

This means the AI sector may soon become a profit-driven industry rather than a field of scientific progress, which could eventually lead to a monopoly dominated by a single company.

Eric Lee/Bloomberg via Getty Images

OpenAI CEO Sam Altman has already met with policymakers from around the world and has openly stated his intention to brief them on this new and unfamiliar form of intelligence and to help shape the related regulation. Although he supports, in principle, the idea of an international body to oversee AI, he believes that some restrictive rules, such as banning copyrighted material from datasets, could pose an unfair obstacle. The "openness" in OpenAI's name has clearly drifted away from the radical transparency the company championed at its founding.

The findings of the Stanford report, however, also suggest that fear of competitors does not justify this degree of secrecy around the models, because almost every company performs poorly in this area. For example, no company publishes statistics on how many users rely on its model, or on the regions or market segments in which the model is used.

Organizations that embrace open-source principles have a saying: "Given enough eyeballs, all bugs are shallow" (Linus's law). Raw numbers help identify and fix problems.

But open-source practices increasingly tend to undermine the social standing and recognition of companies that operate in the open, both inside and outside the company, so insisting on them unconditionally makes little sense. It is therefore better to focus not on whether a model is open or closed, but on gradually expanding external access to the "data" that underlies AI models.

Scientific progress depends on reproducibility, that is, on whether a given research result can be produced again. Unless transparency about the main ingredients used to build each model is made concrete, the sector is likely to remain stuck in a closed, stagnant monopoly. This is an important priority to keep in mind, because AI technology is spreading rapidly across industries and will continue to do so.

For journalists and researchers, understanding the data is increasingly important, and for policymakers, transparency is a prerequisite for forward-looking policy efforts. Transparency matters to the public as well: as end users of AI systems, people can become either perpetrators or victims of potential problems involving intellectual property, energy consumption, and bias. Sam Altman argues that the risk of extinction from AI should be a global priority, alongside pandemics and nuclear war. But before we reach that dangerous point, we should not forget that maintaining a healthy relationship with AI is a condition of our society's survival.

*This article is the original version of an unsigned column published in Elektronikus Újság on October 23, 2023.
