Recently, about a year after the launch of ChatGPT, a tremendous drama unfolded within the board of OpenAI, a non-profit organization that grew from nothing to $1 billion in annual revenue. Sam Altman, the company's CEO, was fired; then, after announcing a move to Microsoft, he was reinstated as OpenAI's CEO. Founding CEOs typically hold the most powerful position in a company, so it is extremely rare for a board to fire one, especially at a giant company valued at $80 billion.

However, this intense 5-day drama was possible due to OpenAI's unique structure, bound by its mission statement of 'for humanity'. The three independent board members who reportedly spearheaded Altman's dismissal were all connected to effective altruism (EA), a philosophy aligned with the company's goal of ensuring 'the survival of humanity and all of observable universe.'

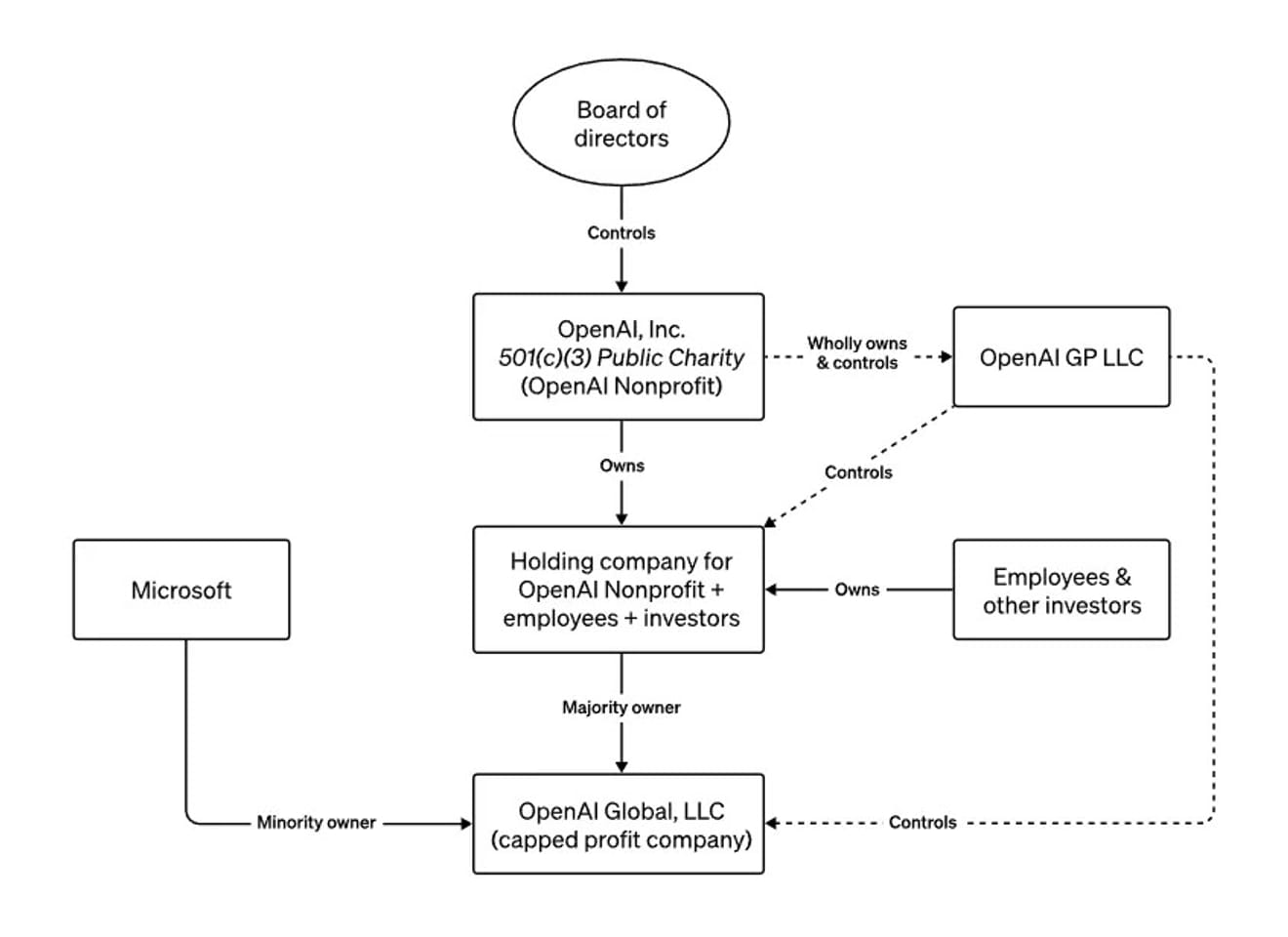

The board structure of OpenAI

Throughout the year, Altman toured the globe, warning the media and governments about the existential risks posed by the technology he is developing. He has described OpenAI's unusual structure, in which a non-profit board oversees a for-profit business, as a safeguard against the reckless development of powerful AI. In a June interview with Bloomberg, he said that if he acted recklessly or against humanity's best interests, the board could remove him. In other words, the structure was intentionally designed so that a board that prioritizes safety over profits, particularly with regard to the emergence of uncontrollable AGI, could fire the CEO at any time.

So, how should we view the current situation, in which OpenAI's new CEO is the same as its previous CEO?

It is hard to dismiss this as a non-event. It reveals that decisions related to the development of ethical artificial intelligence, which has the potential to significantly impact our society, are made by a very small group of individuals. Sam Altman has become a symbol of our times, in which the world's attention is focused on AI development and regulation. And we have witnessed how virtually the only external check on his judgments and decisions was effectively discarded, which highlights the importance of additional external mechanisms in the future.

Furthermore, this incident has clarified the positions and interpretations of various groups: doomsayers who worry that AI will destroy humanity, transhumanists who believe technology will accelerate a utopian future, those who believe in free-market capitalism, and proponents of strict regulations for big tech companies who believe a balance between the potential harms of powerful disruptive technologies and the desire for profit cannot be achieved. This all stems from a fear of a future where AI and humans coexist, prompting the need for a more diverse set of communities capable of predicting this future.

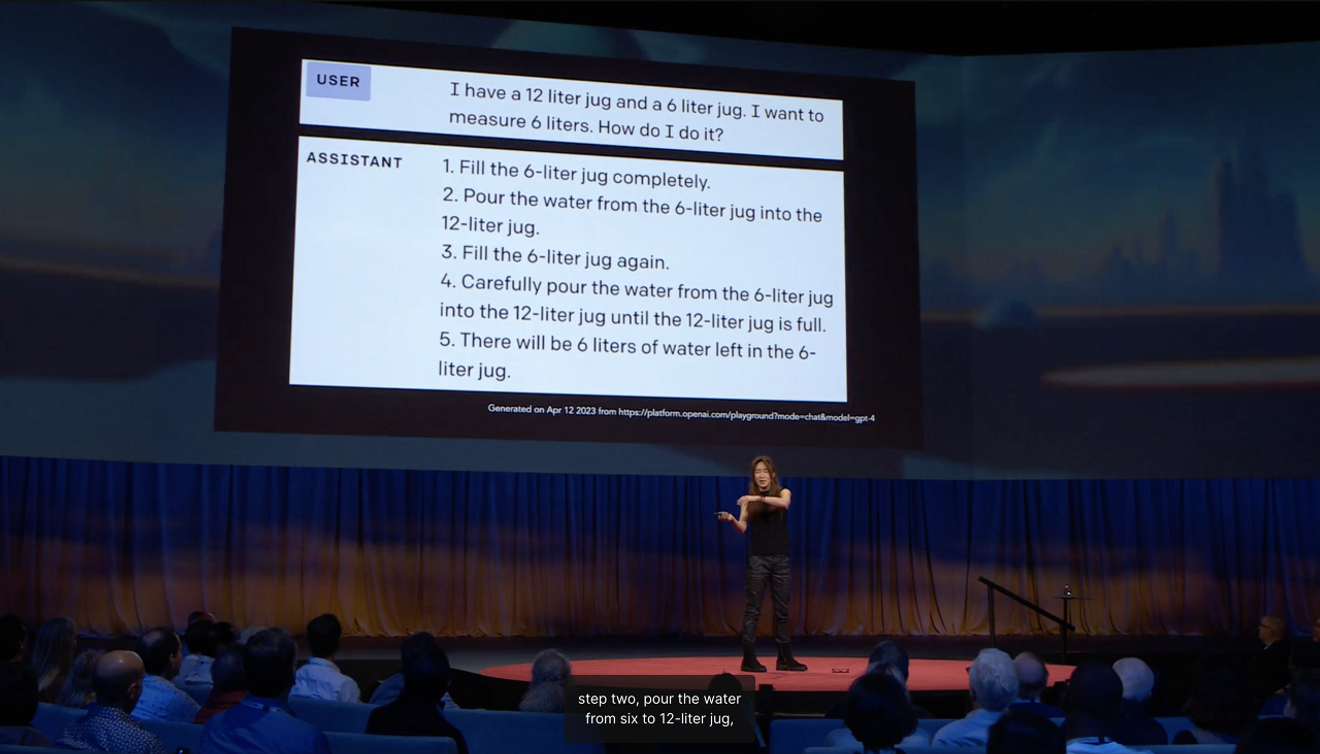

Yejin Choi, a professor at the University of Washington who has been named to a list of the 100 most influential people in the world's AI field, explained in her TED Talk why an AI that can pass various national examinations adds unnecessary steps when asked to measure 6 liters of water using a 12-liter and a 6-liter jug: it lacks the common sense that humans acquire through social interaction.

When predicting the future, we often identify something new from an outsider's perspective, using 'boundaries' that indicate the direction of the mainstream. In this case, what appears to be a stable vision of the future from an external perspective is always, to some extent, an expectation abstracted from 'vivid experience' based on the present. Arjun Appadurai, an American anthropologist, argued that imagination is a social practice rather than a private, individual capacity. This implies that various imaginations of the future can manifest in reality, and this incident can be interpreted as one landscape created by the imaginations related to the uncertain future of AGI.

Now that we have confirmed that the expectations of industry leaders regarding the future have significant political implications, we will need a deeper understanding of the futures that are collectively imagined and constructed within diverse social and cultural contexts when it comes to deciding the future of AI. It's time to ask how we can create opportunities to actively present collective expectations based on vivid experiences within various communities.
